In the world of social research and policy evaluation, we often obsess over a single, binary question: Does this intervention work?

We run pilots, conduct randomized controlled trials (RCTs), and look for statistical significance. Yet, we frequently encounter a frustrating paradox: a program that was a resounding success in one community fails miserably in another. Traditional methods often treat interventions as a “black box”—inputs go in, and outcomes come out, but the internal gears remain invisible.

If you work with complex social programs—whether in public health, education, or community development—you need a framework that embraces complexity rather than trying to control it away. Enter Realist Evaluation (RE).

Originally developed by Pawson and Tilley (1997), Realist Evaluation shifts the research question from “Does it work?” to something far more actionable: “What works, for whom, in what respects, to what extent, in what contexts, and how?”

Here is a deep dive into the Realist Evaluation framework and why it is an indispensable tool for modern social research.

1. The Core Philosophy: Opening the Black Box

Traditional research (often rooted in positivism) tends to view interventions like a drug trial: pill A vs. placebo B. But social programs are not pills. They depend on human behavior, local culture, and dynamic relationships.

Realist evaluation is theory-driven. It assumes that programs don’t “cause” outcomes; people do. An intervention offers resources, but it is the stakeholders’ reasoning about and reaction to those resources that determine the result.

RE seeks to unpack the “black box” to identify the underlying Generative Mechanisms—the invisible forces that explain why an intervention produces a specific outcome in a specific setting.

2. The Engine of Inquiry: Context-Mechanism-Outcome (CMO)

The heartbeat of Realist Evaluation is the CMO Configuration. To understand any social program, we must hypothesize and test the relationship between three components:

C – Context

Context is not just “location.” It refers to the conditions needed for a mechanism to fire. This includes:

- Social norms and culture (e.g., beliefs about parenting roles).

- Organizational structures (e.g., staffing levels, leadership support).

- Pre-existing relationships (e.g., trust between a community and a service provider).

Context explains where and when a program works.

M – Mechanism

This is the most misunderstood concept in RE. A mechanism is not the activity itself (e.g., “attending a workshop”). It is the underlying process that drives the outcome. A useful formula found in the literature is:

Mechanism = Resources + Reasoning

- Resource: What the program provides (e.g., a leaflet on family planning (FP) methods).

- Reasoning (Response): How the participant reacts (e.g., “I feel empowered because I know the different FP methods and can choose the one I prefer”).

Mechanisms are often hidden and unobservable, representing the cognitive or emotional shifts in participants.

O – Outcome

These are the results, which can be intended or unintended, qualitative or quantitative. Realist evaluation excels at spotting unintended outcomes because it looks at the whole picture. For example, a family planning program might reduce family size (intended) but also lower the mental health risks that women face from unintended pregnancies (unintended).
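One way to keep CMO hypotheses explicit during analysis is to record them as structured data. The sketch below is a hypothetical Python representation, not something prescribed by Pawson and Tilley; the class and field names (`Mechanism`, `Outcome`, `CMOConfiguration`, `intended`) are assumptions chosen for illustration, and the example values paraphrase the family planning illustrations used in this section.

```python
from dataclasses import dataclass, field


@dataclass(frozen=True)
class Mechanism:
    """Mechanism = resource offered + the participant's reasoning in response."""
    resource: str
    reasoning: str


@dataclass(frozen=True)
class Outcome:
    description: str
    intended: bool


@dataclass
class CMOConfiguration:
    """One hypothesised Context-Mechanism-Outcome link (illustrative names)."""
    context: list[str]
    mechanism: Mechanism
    outcomes: list[Outcome] = field(default_factory=list)

    def unintended_outcomes(self) -> list[Outcome]:
        # Keep only the outcomes the program did not set out to achieve.
        return [o for o in self.outcomes if not o.intended]


# Example values paraphrased from the text; they are illustrations, not findings.
cmo = CMOConfiguration(
    context=["trust between the community and the service provider"],
    mechanism=Mechanism(
        resource="leaflet on family planning methods",
        reasoning="feels empowered to choose a preferred method",
    ),
    outcomes=[
        Outcome("reduced family size", intended=True),
        Outcome("lower mental health risk from unintended pregnancies", intended=False),
    ],
)

for outcome in cmo.unintended_outcomes():
    print(outcome.description)
```

Writing the resource and the reasoning as separate fields makes it harder to mistake the activity itself for the mechanism.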

3. How It Works in Practice: A Case Example

Let’s look at a practical example from family planning (FP) counselling to see a CMO in action.

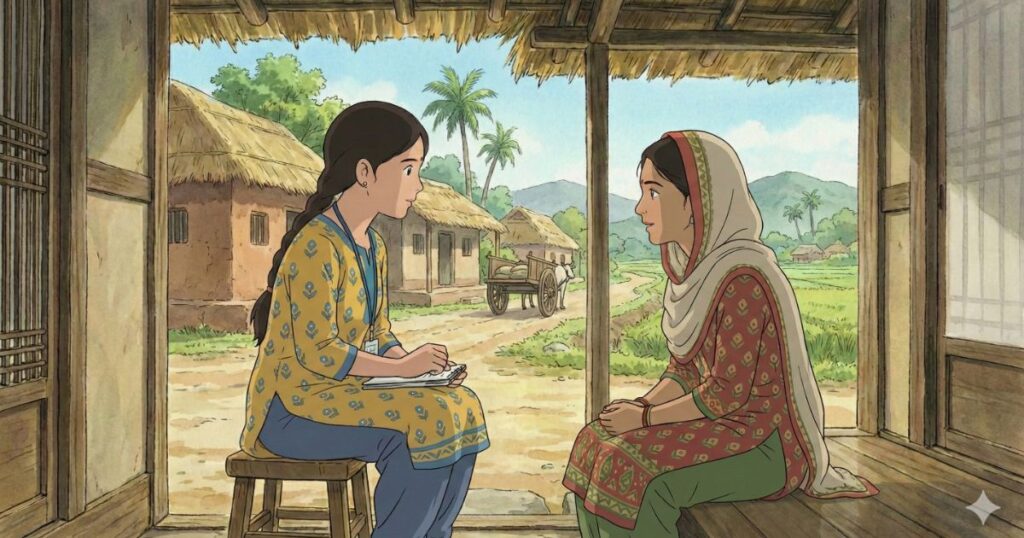

- The Intervention: A field facilitator counsels and guides people toward their desired FP method.

- Context (C): The facilitator takes the time to get to know the person, their preferences, and their family background, and uses a person-centered counselling approach.

- Mechanism (M):

  - Resource: The time and counselling provided.

  - Reasoning: The beneficiary feels “understood” and gains clarity on their choice of method.

- Outcome (O): The beneficiary discloses specifically what help they need.

In a different context, for example one where the facilitator is rushed or authoritative, the mechanism of “feeling understood” would not fire and the intended outcome would be unlikely to occur, even if the “intervention” (the visit) technically took place.
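The contrast between the two contexts can be written as a simple rule. The following is a toy sketch, assuming a mechanism "fires" only when the resource is delivered and every required contextual condition is present. The function name `mechanism_fires` and the condition labels are hypothetical, and real realist analysis rests on interpretive judgement rather than boolean checks.

```python
def mechanism_fires(resource_delivered, required_conditions, observed_conditions):
    """Return (fired, missing): whether the mechanism is expected to fire,
    and which required contextual conditions were not observed."""
    missing = sorted(set(required_conditions) - set(observed_conditions))
    fired = bool(resource_delivered) and not missing
    return fired, missing


# Hypothetical conditions for the "feeling understood" mechanism.
REQUIRED = {"worker takes time", "person-centered approach"}

# The same intervention (the visit took place) observed in two contexts.
supportive = {"worker takes time", "person-centered approach"}
rushed = {"authoritative tone"}

print(mechanism_fires(True, REQUIRED, supportive))
# (True, [])
print(mechanism_fires(True, REQUIRED, rushed))
# (False, ['person-centered approach', 'worker takes time'])
```

Returning the missing conditions alongside the yes/no answer keeps the focus on why the mechanism did not fire, not only on whether it did.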

4. Why Realist Evaluation is Critical for Social Research

If you are a researcher, evaluator, or program designer, here is why you should consider adding RE to your toolkit:

It Solves the “Transferability” Problem

Policymakers love to “scale up” successful pilots. Often, these scale-ups fail because the original success depended on a specific context that wasn’t replicated. RE produces Middle-Range Theory: theory that is abstract enough to apply across different settings but specific enough to be testable. It helps identify which contextual factors (e.g., staff trust, cultural alignment) must be present for the program to work in a new location.

It Addresses Inequality (The “For Whom” Question)

Social programs rarely benefit everyone equally. “Average effects” often hide the fact that a program works well for the privileged but fails the vulnerable. RE explicitly asks “for whom?”

- Example: A diabetes program might work wonders for patients with high health literacy but fail those with low literacy. RE identifies the specific mechanism (e.g., ability to interpret complex data) that causes this disparity, allowing for targeted improvements.
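A small worked calculation shows how an average can mask this kind of disparity. The counts below are invented purely for illustration (they are not results from any study), and the group labels and the `success_rate` helper are hypothetical.

```python
# Invented illustrative counts: (participants enrolled, participants who improved).
groups = {
    "high health literacy": (80, 64),
    "low health literacy": (20, 2),
}


def success_rate(enrolled, improved):
    """Share of enrolled participants who improved."""
    return improved / enrolled


total_enrolled = sum(n for n, _ in groups.values())
total_improved = sum(k for _, k in groups.values())

print(f"pooled: {success_rate(total_enrolled, total_improved):.0%}")
# pooled: 66%
for name, (n, k) in groups.items():
    print(f"{name}: {success_rate(n, k):.0%}")
# high health literacy: 80%
# low health literacy: 10%
```

In this made-up case the pooled rate of 66% looks respectable, while the low-literacy group improves only 10% of the time. That gap is what the “for whom” question is meant to surface.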

It is Method-Neutral and Flexible

You don’t have to throw away your surveys. While RE leans heavily on qualitative methods (interviews are crucial for understanding people’s “reasoning”), it is method-neutral. You can use:

- Realist Interviews: A “teacher-learner” cycle where you present your theory to practitioners and ask them to refine it.

- Quantitative Data: To track patterns of outcomes across different contexts.

- Documentary Analysis: To understand organizational history and context.

It Embraces Failure as Data

In RE, a “failed” outcome is just as valuable as a successful one. If a mechanism didn’t fire, analyzing why (Was the resource missing? Did the context block it?) provides actionable intelligence to redesign the program.

Conclusion: From Judgment to Learning

The goal of social research should not just be to audit programs (pass/fail) but to improve them. Realist Evaluation transforms evaluation into a learning process. It moves us away from asking, “Did we hit the target?” to asking, “Did we understand the journey?”

By respecting the complexity of human behavior and the critical role of context, Realist Evaluation provides the nuance needed to tackle wicked social problems. It allows us to build programs that are not just “evidence-based,” but context-sensitive and mechanism-driven.